The Department of Defense just handed Anthropic a label that no AI company wants on its resume. By flagging the startup as a supply-chain risk, the Pentagon has effectively built a wall between one of the world's most prominent AI labs and the massive federal contracts it needs to scale. Anthropic isn't taking this sitting down. The company is preparing a legal challenge that could redefine how the United States treats private tech firms in the name of national security.

This isn't just about one company. It's a signal. The Pentagon is signaling that "Safety First" branding doesn't buy you a free pass on the security checklist. Anthropic has spent years marketing itself as the ethical, safe alternative to OpenAI. Now, that same safety-focused architecture is under a microscope, and the government doesn't like everything it sees.

The Pentagon Supply Chain Risk Problem

When the Pentagon flags a firm as a supply-chain risk, it isn't just a suggestion. It's a roadblock. It means the Department of Defense believes that using Anthropic's technology—specifically the Claude models—could expose the U.S. military to foreign interference or technical vulnerabilities. This usually happens when the government finds evidence of foreign investment or dependencies on hardware and software from regions deemed adversarial.

Anthropic's ownership structure has been a point of debate for a while. While they've taken billions from Amazon and Google, the early funding rounds and the complex "Benefit Corporation" structure create a layer of opacity that the Pentagon's procurement officers clearly find problematic. You can't just promise you're the good guys. You have to prove that every line of code and every dollar in the bank is beyond reproach.

The timing is brutal. Anthropic just launched its most capable models to date, aiming directly at the enterprise and government markets. Getting blacklisted by the largest buyer of technology in the world is a gut punch to their valuation and their long-term roadmap.

Anthropic Plans Legal Challenge to Clear Its Name

The legal strategy here is simple. Anthropic has to prove the Pentagon's assessment was arbitrary or based on outdated data. If the company doesn't fight this, the "risk" label becomes a permanent stain. Other agencies like the Department of Justice or the FBI often follow the Pentagon's lead on approved-vendor lists.

Anthropic's lawyers are likely looking at the Administrative Procedure Act. This law lets courts set aside federal agency decisions that are "arbitrary, capricious, an abuse of discretion, or otherwise not in accordance with law." The company's argument is probably going to lean on its transparency. It will say it has shared more about its model internals and safety protocols than almost any other AI lab.

It's a risky move. Suing the customer you are trying to win is rarely the first choice. But Anthropic is backed into a corner. If it can't sell to the U.S. government, it loses a massive revenue stream and the "gold standard" seal of approval that military contracts provide to commercial customers.

Why Safety Labels Aren't Enough for the Military

You've heard the talk about AI safety. Anthropic talks about it more than anyone. But the Pentagon doesn't care about "Constitutional AI" in the way a researcher does. The military cares about uptime, data exfiltration, and whether a model can be "poisoned" by a foreign state.

The supply chain for AI isn't just about where the chips come from. It's about the data used for training and the engineers who have access to the weights. If the Pentagon suspects that any part of Claude's development was touched by entities with ties to China or Russia, the "safety" of the output becomes irrelevant. A safe model that leaks secrets is still a failure.

The Real Impact on the AI Market

If Anthropic loses this fight, it changes the map for every other AI startup. It tells the market that being a domestic U.S. company isn't enough. You need total transparency into your cap table and your data sources.

OpenAI and Microsoft are watching this closely. They have their own government-grade clouds, like Azure Government, which are built to meet these exact security standards. Anthropic is trying to play in that same league without the decades of federal compliance experience that Microsoft brings to the table. It's a hard lesson in the difference between being a "tech darling" and being a "defense contractor."

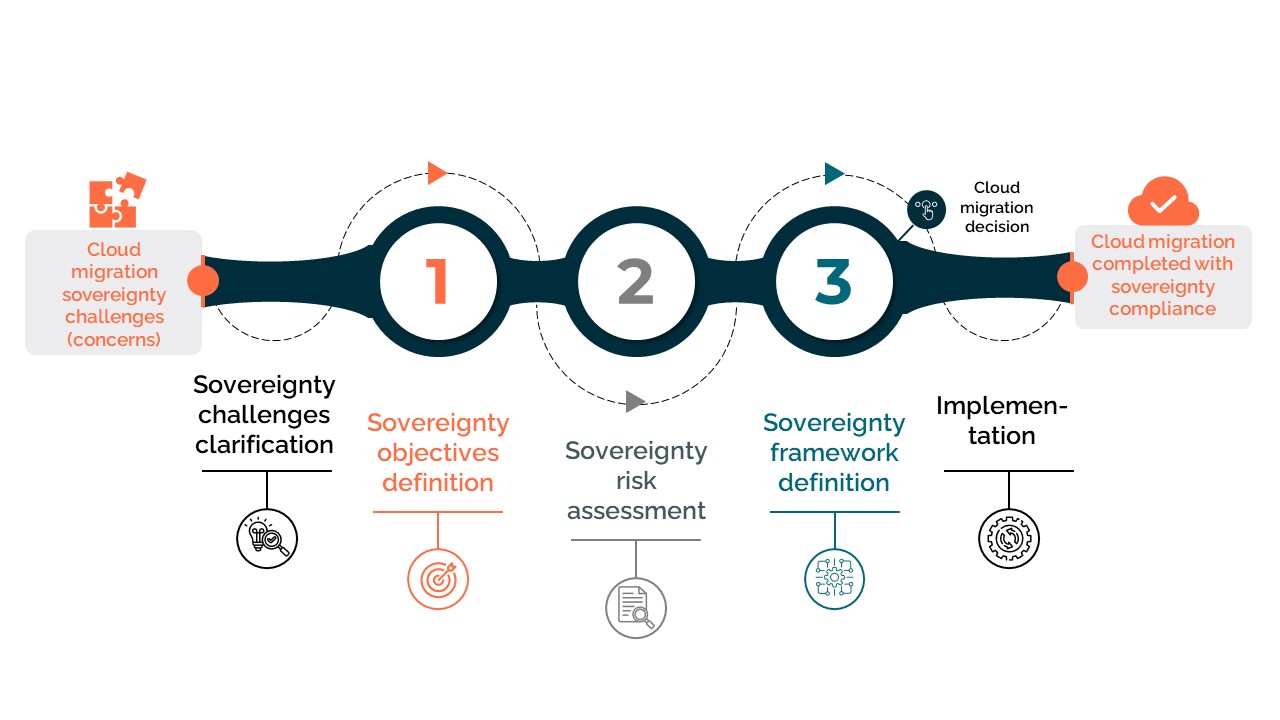

Smaller players will feel the chill too. If a company with Anthropic's resources is getting flagged, what hope does a seed-stage startup have of winning a defense contract? We're seeing a bifurcation of the AI world. There's the "commercial AI" used for writing emails and making art, and then there's "sovereign AI" that's vetted, scrubbed, and locked down for national security.

What Happens if the Lawsuit Fails

A failed legal challenge would be a disaster for Anthropic. It would cement the Pentagon's "risk" designation and likely trigger a sell-off or a down-round for the company. Investors hate uncertainty, and "potential national security threat" is the ultimate uncertainty.

We'd likely see Anthropic forced to undergo a massive internal audit or even divest from certain investors to appease the Department of Defense. This has happened before with companies like TikTok, though the stakes for an AI infrastructure company are arguably higher.

The most likely outcome is a settlement where Anthropic agrees to even stricter government oversight in exchange for the "risk" label being dropped. But getting to that point requires the company to show it's willing to go to war in court.

Steps for Tech Firms Watching This Case

If you're running a tech firm or investing in one, don't wait for a letter from the Pentagon. Audit your own supply chain now. This means looking at your cloud providers, your third-party API dependencies, and especially your investor list.
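As a rough illustration of what a first-pass self-audit could look like, here is a minimal Python sketch. Everything in it is an assumption made for the example: the `Dependency` fields, the `REVIEW_JURISDICTIONS` set, and the vendor names are hypothetical, and none of it reflects actual Pentagon criteria. It simply walks an inventory of cloud providers, API dependencies, and investors and lists the entries that need follow-up.

```python
from dataclasses import dataclass

# Hypothetical review list, for illustration only. A real program would take
# this from legal counsel or the applicable procurement guidance.
REVIEW_JURISDICTIONS = {"CN", "RU"}


@dataclass(frozen=True)
class Dependency:
    name: str          # vendor, API, or investor name
    category: str      # "cloud", "api", or "investor"
    jurisdiction: str  # ISO 3166-1 alpha-2 country code
    documented: bool   # True if provenance paperwork is on file


def audit(inventory):
    """Return (name, reason) pairs for entries that need follow-up."""
    findings = []
    for dep in inventory:
        if dep.jurisdiction in REVIEW_JURISDICTIONS:
            findings.append((dep.name, "jurisdiction needs review"))
        if not dep.documented:
            findings.append((dep.name, "missing documentation"))
    return findings


# Made-up inventory entries, not real vendors or investors.
inventory = [
    Dependency("ExampleCloud", "cloud", "US", True),
    Dependency("ExampleTranslateAPI", "api", "CN", True),
    Dependency("Example Ventures", "investor", "US", False),
]

for name, reason in audit(inventory):
    print(f"{name}: {reason}")
```

A list like this is only a starting point. The value is in keeping the inventory current and attaching real documentation to every entry, not in the script itself.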

The "risk" label is the new "denied" stamp. You need to have a clear, documented trail of where your data comes from and who has access to your production environments. Anthropic's current mess proves that reputation doesn't matter when it comes to federal procurement—only hard evidence of security does.

Move your data to sovereign cloud instances if you plan on courting government clients. Secure your model weights with the same intensity you'd use for physical assets. The Pentagon's move against Anthropic shows that, for AI companies selling to the public sector, the era of "move fast and break things" is over. Now, it's about moving slowly, being compliant, and having a very good team of lawyers on speed dial.