Stop blaming the algorithm for a tragedy that has existed since the invention of the playground.

The "Blackout Challenge" isn't a new digital plague. It’s a rebranding of the "Choking Game," a dangerous behavior that predates TikTok, YouTube, and even the commercial internet. By framing this as a unique byproduct of social media, we are missing the structural rot in how we understand adolescent risk-taking and digital literacy. We are chasing ghosts while the real house is on fire.

The mainstream narrative is lazy. It suggests that a predatory "trend" simply appears, a child sees it, and a tragedy occurs. This linear logic ignores decades of sociological data on peer contagion and the "Werther Effect," the documented rise in imitative suicides that follows heavily publicized ones. When news outlets and frantic parent groups blast the specifics of these "challenges" across every screen in the country, they aren't warning people; they are providing a manual.

The Myth of the "Viral Trend"

Most of what the public identifies as a "viral challenge" is actually a localized event amplified by legacy media. In the case of the "Blackout Challenge," the behavior involves self-strangulation to achieve a brief euphoric state through oxygen deprivation.

Medical literature has tracked this since at least the 1990s. The CDC released a report in 2008, years before TikTok existed, identifying 82 probable deaths related to the "choking game" between 1995 and 2007. The mechanics haven't changed. The only thing that has changed is the scapegoat.

By labeling it a "TikTok Challenge," we give it a sense of novelty and mystery that appeals to the teenage brain’s search for sensation. We have turned a clinical behavioral issue into a dark, digital subculture.

The teenage brain is a high-performance engine with no brakes. The limbic system, which governs emotions and rewards, matures long before the prefrontal cortex, which handles impulse control. When you tell a teenager "Don't look at this specific, dangerous thing on the internet," you are essentially lighting a neon sign that says "High-Intensity Reward Found Here."

Algorithmic Accountability vs. Parental Absolution

It is easy to sue a tech giant. It is much harder to admit that we have outsourced the moral and physical supervision of our children to a 6-inch glass rectangle.

The "lazy consensus" dictates that TikTok’s algorithm is a malevolent force pushing death into the feeds of unsuspecting minors. In reality, algorithms are mirrors. They reflect engagement. If a user lingers on "edge" content, the machine provides more. This is a design flaw in terms of safety, yes, but it is not an editorial choice.

The industry insider truth? No platform wants its users to die. Dead users don't click ads. The engineers at these firms are constantly playing a game of Whac-A-Mole with hashtags, using "shadow-banning" and keyword redirects to hide self-harm content. But they are fighting human nature with math.

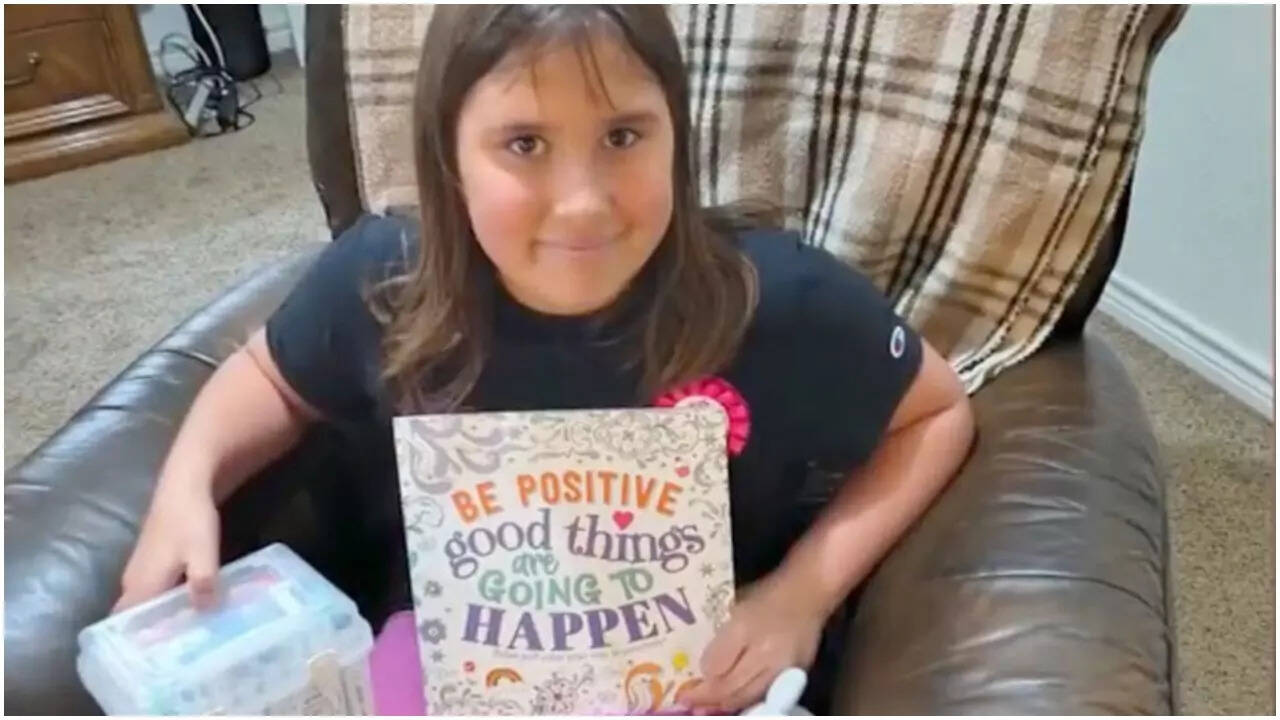

When a tragedy occurs, like the heart-wrenching loss of the young girl in Texas, the immediate reaction is to demand "better filters." This is a fundamental misunderstanding of how the internet works. You cannot filter for intent. A video of a child holding a belt can be a "get ready with me" fashion clip or a precursor to a tragedy. AI cannot yet reliably tell, from the video alone, which of those contexts a household object appears in; by the time the context is clear, it is too late.

The Danger of Public Service Announcements

We need to talk about the "Awareness Paradox."

Every time a major news network runs a segment titled "What Parents Need to Know About the Blackout Challenge," they increase the search volume for that challenge by orders of magnitude. This is a documented phenomenon.

Imagine a scenario where a specific bridge has a structural flaw. To warn people, the city puts up a massive billboard that says, "Do not jump off this bridge; it is 500 feet high and the fall is exhilarating but deadly." You haven't just warned people; you've advertised the location to every person currently experiencing a mental health crisis.

Mainstream reporting on the "Blackout Challenge" often includes:

- The name of the challenge (Searchable).

- The method used (Instructional).

- The "reward" or "feeling" associated with it (Incentivizing).

This isn't journalism; it's a contagion vector.

The Digital Literacy Lie

We talk about "digital literacy" as if it’s about knowing how to spot a phishing email. Real digital literacy is understanding the attention economy.

We need to teach children—and their parents—that their attention is a commodity being harvested. The "challenge" isn't a game; it's an engagement hack. The people posting these videos aren't your friends; they are influencers trading your safety for "likes."

The current approach to digital safety is reactive. We wait for a kid to die, then we ban a hashtag. This is like trying to stop a flood by catching raindrops with tweezers.

Instead of banning the tech, we should be deconstructing the social validation that makes these risks attractive. We need to stop treating the internet as a separate "realm" and start treating it as the high-risk environment it actually is. You wouldn't leave an eight-year-old alone in the middle of Times Square at 2:00 AM, yet we let them wander the digital equivalent every night behind closed doors.

The Cost of the "Safe" Internet

The push for total safety online usually results in two things:

- Mass Surveillance: Platforms are pressured to monitor every private message and upload, destroying any semblance of privacy.

- The "Walled Garden" Failure: Kids are savvy. When you make a platform "safe" (like YouTube Kids), they move to the platforms that aren't. They want to be where the adults are. They want to be where the "real" stuff is.

If we want to protect kids, we have to stop looking for a software update to solve a hardware problem in the human brain.

The "Blackout Challenge" is a tragedy, but the way we talk about it is a farce. We are using the deaths of children to fuel a moral panic that avoids the uncomfortable reality: we have built a society where children find more community in dangerous digital stunts than in their physical surroundings.

We have replaced supervision with "monitoring apps" and replaced conversation with "screen time limits."

Stop Monitoring and Start Disrupting

If you are a parent or an educator relying on a filter to keep your kids safe, you have already lost.

The most effective "firewall" isn't a piece of code; it's the removal of the incentive. We need to strip these challenges of their "cool" factor. We need to stop calling them "challenges"—which implies a test of strength or bravery—and start calling them what they are: biological accidents.

We need to stop demanding that tech companies be the parents. They are corporations. Their loyalty is to the shareholder, not your child’s prefrontal cortex.

The industry isn't going to save you. The "Status Quo" of blaming the app is a comfort blanket for a society that has forgotten how to engage with its youth.

Get off the app. Delete the account. Or, at the very least, stop acting surprised when a machine designed to maximize engagement eventually engages someone to death.

The problem isn't the challenge. The problem is the vacuum it filled.