The proliferation of Generative Adversarial Networks (GANs) and diffusion models has fundamentally altered the verification cycle of open-source intelligence (OSINT). In the context of U.S.-Iran tensions, AI-generated satellite imagery does more than create "fake news": it raises the noise floor of the information environment to a level that threatens to paralyze state-level decision-making and to trigger kinetic responses based on digital artifacts. This analysis deconstructs the structural vulnerabilities in the current information supply chain and identifies three specific mechanisms (Verification Latency, Cognitive Anchoring, and Attribution Obfuscation) that make synthetic imagery a potent tool for asymmetric psychological warfare.

The Architecture of Visual Deception

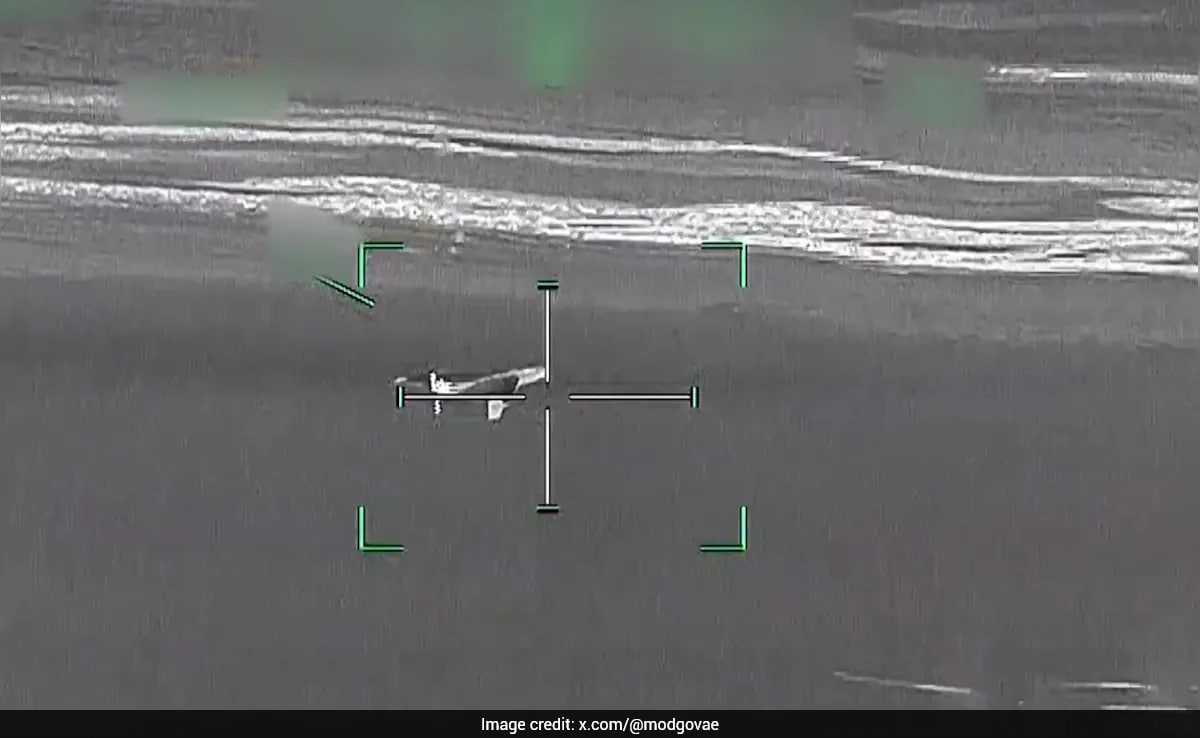

Synthetic satellite imagery is more dangerous than traditional "deepfakes" of human faces because it exploits the presumed objectivity of top-down, multi-spectral data. When an image purportedly shows a charred Iranian missile silo or an American carrier group in the Strait of Hormuz, the viewer assumes a technical barrier to entry that no longer exists.

Technical Parity and the Democratization of Sophistication

The barrier to generating convincing overhead imagery has collapsed due to two primary shifts:

- Transfer Learning on Public Datasets: Models trained on high-resolution overhead imagery (such as that published by commercial providers like Maxar or Planet Labs) can be fine-tuned with minimal compute to simulate specific geographic signatures.

- Texture Synthesis and Shadows: Modern diffusion models excel at recreating the specific grain, atmospheric haze, and shadow angles inherent in orbital photography, making manual "glitch hunting" increasingly unreliable.

The result is a "Truth Gap"—the time elapsed between the viral spread of a synthetic image and its technical debunking by specialists. In a conflict scenario, this gap is the window where escalation occurs.

The Three Pillars of Information Decay

To understand why these images are effective, we must categorize their impact through a structured framework.

1. Verification Latency as a Strategic Asset

Social media dissemination outpaces forensic verification by a ratio of roughly 100:1. While a generative model can produce a convincing image of a bombed Iranian enrichment facility in seconds, an OSINT analyst requires hours or days to:

- Cross-reference historical satellite passes to verify terrain consistency.

- Analyze metadata (which is often stripped or faked).

- Coordinate with commercial providers for a fresh tasking of a satellite to the coordinates in question.

This latency creates a "Decision Vacuum." For a commander or a political leader, the pressure to respond to an apparent "first strike" shown on social media may override the protocol to wait for verified intelligence. The synthetic image serves as a catalyst for reactive, rather than strategic, movement.

2. Cognitive Anchoring and the Persistence of First Impressions

The first piece of information a person receives on a topic tends to act as an "anchor." Even if an image is later proven to be a fabrication, the emotional and cognitive shift it triggered (fear, aggression, or triumph) remains. In the U.S.-Iran context, a fake image of a destroyed naval vessel sets a narrative tone that subsequent retractions cannot fully erase. The "Liar’s Dividend" also applies here: as the public becomes aware that satellite images can be faked, it begins to doubt real evidence of Iranian or American military movements, eroding any shared picture of reality.

3. Attribution Obfuscation

Unlike a state-issued press release, a leaked "satellite photo" posted by a burner account on X (formerly Twitter) provides plausible deniability. This allows state actors to test domestic and international reactions to potential escalations without taking formal responsibility. If the reaction is too volatile, the image is dismissed as "internet misinformation." If the reaction is favorable, it can be used to build a casus belli.

Quantifying the Cost of Verification

The financial and operational burden of debunking synthetic imagery is sharply asymmetric. The cost of generating a single fake image is essentially the cost of electricity for a GPU (roughly $0.05 to $0.50). Debunking that same image involves:

- Commercial Satellite Tasking: High-resolution "fresh" imagery can cost thousands of dollars per capture.

- Specialist Labor: Expert analysts billing $200+/hour.

- Opportunity Cost: The diversion of intelligence assets away from real threats to chase "ghosts" in the digital machine.

This creates an economic asymmetry where the "attacker" (the producer of misinformation) can bankrupt the "defender" (the verifier) through volume alone.
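The asymmetry can be made concrete with a short calculation. The sketch below is illustrative only: the analyst rate and the generation cost come from the figures quoted above, while the tasking price of $3,000 and the four analyst hours are assumptions chosen to stand in for "thousands of dollars" and "hours or days." The names `DebunkCost`, `asymmetry_ratio`, and `defender_spend` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DebunkCost:
    """Per-image verification cost. Defaults are illustrative assumptions."""
    tasking_usd: float = 3000.0       # assumed; the text says only "thousands of dollars"
    analyst_hours: float = 4.0        # assumed; the text says "hours or days"
    analyst_rate_usd: float = 200.0   # lower bound quoted in the text

    def total(self) -> float:
        """Tasking plus labor; opportunity cost is not modeled here."""
        return self.tasking_usd + self.analyst_hours * self.analyst_rate_usd


def asymmetry_ratio(generation_usd: float, debunk: DebunkCost) -> float:
    """Defender dollars spent per attacker dollar for a single image."""
    if generation_usd <= 0:
        raise ValueError("generation_usd must be positive")
    return debunk.total() / generation_usd


def defender_spend(n_fakes: int, debunk: DebunkCost) -> float:
    """Total verification spend if every fake is fully investigated."""
    return n_fakes * debunk.total()


if __name__ == "__main__":
    cost = DebunkCost()
    # Upper-bound generation cost from the text: $0.50 per image.
    print(asymmetry_ratio(0.50, cost))   # 7600.0
    print(defender_spend(100, cost))     # 380000.0
```

Under these assumed inputs, each attacker dollar forces thousands of defender dollars, and a batch of 100 fakes costs the attacker at most $50 while full investigation costs the defender $380,000. Different tasking prices or labor estimates change the magnitude but not the direction of the imbalance.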

The Failure of Current Detection Frameworks

The reliance on "AI detectors" is a systemic vulnerability. These tools are inherently reactive; they are trained on known patterns of existing AI models. As soon as a new model architecture is released, the detectors fail until they are updated.

The Metadata Fallacy

Proponents of content provenance standards (such as C2PA, which attaches cryptographically signed metadata to a file) suggest that all "real" images will soon carry a verifiable signature. However, in a conflict zone, the most vital images are often leaked or captured via secondary means (a photo of a screen, a compressed Telegram upload), which strips this metadata. A missing signature does not prove an image is fake, but treating signatures as the default creates a "guilty until proven innocent" environment for legitimate whistleblowers or field reporters whose images arrive without one.
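One way to avoid the fallacy in tooling is to keep three provenance outcomes distinct instead of collapsing them into "signed" and "fake." The sketch below is a minimal illustration under that assumption. It does not call any real C2PA library, and the `Provenance` enum, the `triage` function, and the returned labels are all hypothetical.

```python
from enum import Enum


class Provenance(Enum):
    VALID = "valid"      # provenance data present and the signature verifies
    INVALID = "invalid"  # provenance data present but verification fails
    ABSENT = "absent"    # no provenance data (stripped, screenshot, re-upload)


def triage(provenance: Provenance) -> str:
    """Map a provenance result to a handling label (labels are hypothetical)."""
    if provenance is Provenance.VALID:
        # Confirms who signed the file, not that the depicted scene is real.
        return "origin-confirmed"
    if provenance is Provenance.INVALID:
        return "tampering-suspected"
    # Absence is not evidence of fabrication; route to other checks.
    return "unverified"


if __name__ == "__main__":
    for status in Provenance:
        print(status.value, "->", triage(status))
```

The design point is the final branch: an image with no provenance data is sent on for further verification, not labeled as synthetic.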

Structural Recommendations for Information Defense

A shift from "detection" to "resilience" is required to mitigate the risks of synthetic satellite data in the U.S.-Iran theater.

Implementation of Multi-Source Correlation (MSC)

The intelligence community and reputable news organizations must adopt a "Trifecta Verification" protocol before publication:

- Orbital Consistency: Matching terrain, vegetation, and shadow metrics against historical baselines.

- Social/Ground Correlation: Verifying that ground-level reports (seismic sensors, local social media, thermal anomalies) match the visual claims of the satellite image.

- Spectral Analysis: Checking for inconsistencies in data outside the visible spectrum (infrared bands, synthetic aperture radar returns), which is significantly harder for current consumer-grade AI to fake convincingly.
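The three checks above can be sketched as a simple decision rule. This is an illustrative assumption about how such a protocol might be encoded, not a description of any deployed system: each check is recorded as passed, failed, or not yet run, and the image stays a hypothesis until all three pass. The `ImageClaim` class, its field names, and the verdict labels are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ImageClaim:
    """State of the three checks; None means the check has not been run yet."""
    orbital_consistent: Optional[bool] = None   # terrain, vegetation, shadows vs. baseline
    ground_correlated: Optional[bool] = None    # seismic, local reports, thermal anomalies
    spectral_consistent: Optional[bool] = None  # infrared / radar agreement

    def verdict(self) -> str:
        checks = (
            self.orbital_consistent,
            self.ground_correlated,
            self.spectral_consistent,
        )
        # A single failed check is enough to contradict the claim.
        if any(c is False for c in checks):
            return "contradicted"
        # Publication-grade only when every check has run and passed.
        if all(c is True for c in checks):
            return "corroborated"
        # Anything else is still an untested or partially tested hypothesis.
        return "hypothesis"


if __name__ == "__main__":
    print(ImageClaim().verdict())                    # hypothesis
    print(ImageClaim(True, True, None).verdict())    # hypothesis
    print(ImageClaim(True, True, True).verdict())    # corroborated
    print(ImageClaim(True, None, False).verdict())   # contradicted
```

Using a three-valued state for each check keeps "not yet verified" separate from "verified false," which matters when checks complete at different speeds.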

Strategic Buffer Zones

Governments must establish a "De-escalation Protocol" specifically for unverified digital evidence. This involves a pre-agreed delay in public comment on visual "leaks" to allow for a 4-hour verification window. By formalizing this delay, the tactical advantage of Verification Latency is neutralized.
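A minimal sketch of such a hold rule follows. The 4-hour window comes from the text. The rest is an assumption made for illustration: comment is permitted early only if verification has completed, and otherwise once the window has elapsed. The names `VERIFICATION_WINDOW`, `earliest_unverified_comment`, and `may_comment` are hypothetical.

```python
from datetime import datetime, timedelta, timezone

VERIFICATION_WINDOW = timedelta(hours=4)  # window length taken from the text


def earliest_unverified_comment(first_seen: datetime) -> datetime:
    """Time at which the hold expires if verification has not finished."""
    return first_seen + VERIFICATION_WINDOW


def may_comment(first_seen: datetime, now: datetime, verified: bool) -> bool:
    """Assumed rule: speak early only on verified imagery, else wait out the window."""
    if verified:
        return True
    return now >= earliest_unverified_comment(first_seen)


if __name__ == "__main__":
    seen = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
    print(may_comment(seen, seen + timedelta(hours=1), verified=False))  # False
    print(may_comment(seen, seen + timedelta(hours=1), verified=True))   # True
    print(may_comment(seen, seen + timedelta(hours=4), verified=False))  # True
```

Whether comment should ever be permitted on imagery that is still unverified after the window closes is a policy question the sketch does not settle.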

The ultimate risk is not that we will believe a lie, but that we will become unable to act upon the truth. The U.S.-Iran conflict serves as the primary laboratory for this new era of visual warfare. The strategy must move beyond the search for "glitches" and toward a hardened infrastructure of cross-platform data validation. The next phase of this evolution will see GANs integrated with real-time weather data to create "perfect" fakes; at that point, the only defense is a robust, multi-modal intelligence net that treats a single image as a hypothesis, never as a fact.