The transition from human-centric target acquisition to AI-augmented kinetic planning marks a fundamental shift in the tempo and structure of modern warfare. In recent U.S. strikes against Iranian-backed assets, the deployment of computer vision and machine learning models is not merely an incremental speed improvement; it is a structural re-engineering of the kill chain. By offloading the cognitive burden of data synthesis to machines, the military aims to compress the "Sensor-to-Shooter" timeline, though this compression introduces systemic risks: verification bottlenecks and the erosion of human oversight.

The Triad of Algorithmic Air Operations

To understand how AI functions in current Middle Eastern theaters, one must look past the popularized notion of "autonomous robots." The system operates through three distinct functional layers:

- The Ingestion Layer: Aggregating unstructured data from MQ-9 Reaper feeds, synthetic aperture radar (SAR), and signals intelligence (SIGINT).

- The Inference Engine: Utilizing computer vision to identify anomalies—such as a specific mobile missile launcher—against a high-clutter desert or urban background.

- The Optimization Layer: Recommending the specific platform (F-15E, B-1B, or UAV) and munition type to achieve the desired probability of destruction ($P_k$) while minimizing the predicted collateral damage ($C_d$).

This framework moves the military from Heuristic Targeting, which relies on the intuition and fatigue-prone eyes of intelligence officers, to Probabilistic Targeting, where every potential strike is assigned a confidence score based on historical data patterns.

The Latency Paradox in High-Stakes Targeting

The primary driver for integrating AI into the planning of strikes against Iranian-backed militias is the volatility of the targets. Unlike fixed bunkers, these groups frequently utilize "shoot-and-scoot" tactics, where the window of opportunity to strike a logistics hub or a rocket array may exist for only minutes.

In a traditional manual cycle, the time required to cross-reference satellite imagery with ground intelligence creates Decision Latency. If the latency exceeds the target's dwell time, the intelligence is discarded as "stale." AI serves as a latency-reduction mechanism. By processing thousands of hours of video in parallel, the algorithm flags high-value targets for a human "in-the-loop" to verify, theoretically ensuring that the decision to fire occurs within the target's static window.

However, this speed introduces the Verification Bottleneck. As the AI identifies more targets at a faster rate, the human analysts responsible for legal and ethical sign-off become the narrowest point in the system. If the analyst spends only seconds reviewing an AI-generated recommendation to keep pace with the machine, the "human-in-the-loop" becomes a "human-on-the-loop"—a passive observer rather than a critical check.
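
The bottleneck can be made concrete with simple arithmetic. The sketch below is illustrative only: the function name `review_seconds_per_flag` and all staffing and volume figures are hypothetical, not drawn from any real system. It shows how the average human attention available per flagged item shrinks as the flag rate grows while the analyst pool stays fixed.

```python
def review_seconds_per_flag(analysts: int, shift_hours: float, flags_per_shift: int) -> float:
    """Average seconds of human attention available per flagged item.

    Assumes analysts spend the whole shift reviewing and that flags are
    spread evenly across them (both simplifying assumptions).
    """
    if flags_per_shift <= 0:
        raise ValueError("flags_per_shift must be positive")
    total_seconds = analysts * shift_hours * 3600
    return total_seconds / flags_per_shift


# Hypothetical numbers: 4 analysts on an 8-hour shift.
for flags in (50, 500, 5000):
    seconds = review_seconds_per_flag(analysts=4, shift_hours=8, flags_per_shift=flags)
    print(f"{flags:>5} flags per shift -> {seconds:.1f} s of review each")
```

With these made-up figures, a hundredfold increase in flags cuts the review budget from roughly 38 minutes to 23 seconds per item, which is the point at which review risks becoming a formality.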

Quantifying the Error Rate: The Ghost in the Machine

The effectiveness of these AI systems is governed by the trade-off between Precision and Recall.

- High Recall Strategy: The system flags every possible vehicle that looks like a rocket launcher. This ensures no targets are missed but results in a high number of False Positives (civilian trucks identified as threats).

- High Precision Strategy: The system only flags targets when it is 99% certain. This reduces collateral damage risk but results in False Negatives, allowing hostile actors to reposition undetected.
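
The two strategies above correspond to different decision thresholds on the same model scores. The following is a minimal sketch using the standard definitions of precision and recall; the scores and labels are invented toy data, and the function name `precision_recall` is illustrative.

```python
from typing import List, Tuple


def precision_recall(scores: List[float], labels: List[int], threshold: float) -> Tuple[float, float]:
    """Compute (precision, recall) when every score >= threshold is flagged.

    labels: 1 = genuine threat, 0 = benign object.
    """
    pairs = list(zip(scores, labels))
    tp = sum(1 for s, y in pairs if s >= threshold and y == 1)  # correct flags
    fp = sum(1 for s, y in pairs if s >= threshold and y == 0)  # false positives
    fn = sum(1 for s, y in pairs if s < threshold and y == 1)   # false negatives
    # Convention: precision is 1.0 when nothing is flagged,
    # recall is 0.0 when there are no genuine threats in the data.
    precision = tp / (tp + fp) if (tp + fp) else 1.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Toy data: eight detections with model scores and ground-truth labels.
scores = [0.99, 0.95, 0.80, 0.70, 0.60, 0.55, 0.40, 0.30]
labels = [1, 1, 0, 1, 0, 1, 0, 0]

for threshold in (0.5, 0.9):
    p, r = precision_recall(scores, labels, threshold)
    print(f"threshold={threshold}: precision={p:.2f}, recall={r:.2f}")
```

On this toy data the low threshold catches every genuine threat at the price of two false positives, while the high threshold produces no false positives but misses half the genuine threats.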

In the strikes conducted across Iraq, Syria, and Yemen, the U.S. military utilizes models trained on decades of CENTCOM imagery. Yet, these models face the "Out-of-Distribution" problem. If a militia uses a new type of camouflage or a civilian vehicle modification the AI hasn't seen, the model’s confidence scores become unreliable. This is not a software bug; it is a mathematical limit of pattern matching.

The Cost Function of Algorithmic Warfare

The shift to AI-assisted planning is also an economic necessity. The cost of maintaining a 24/7 human-led "Eyes on Target" capability for every square mile of the Middle East is fiscally and logistically unsustainable.

$$\text{Total Cost} = (N \times C_h) + (T \times C_k)$$

Where $N$ is the number of analysts, $C_h$ is the cost per analyst, $T$ is the number of targets, and $C_k$ is the kinetic cost per strike. By implementing AI, the military seeks to keep $N$ static while sharply increasing $T$.
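
A worked example makes the structure of the formula visible. The sketch below evaluates it for two values of $T$ with $N$ held fixed. Every figure is a hypothetical placeholder chosen for round arithmetic, and the function name `total_cost` is illustrative.

```python
def total_cost(n_analysts: int, cost_per_analyst: float,
               n_targets: int, kinetic_cost_per_strike: float) -> float:
    """Total Cost = (N * C_h) + (T * C_k), as defined in the text."""
    return n_analysts * cost_per_analyst + n_targets * kinetic_cost_per_strike


# Hypothetical values: N = 10 analysts, C_h = 100,000, C_k = 150,000.
N, C_H, C_K = 10, 100_000, 150_000

for T in (20, 200):
    cost = total_cost(N, C_H, T, C_K)
    kinetic_share = (T * C_K) / cost
    print(f"T={T:>3}: total={cost:,} kinetic share={kinetic_share:.0%}")
```

With $N$ fixed, the analyst term stays constant, so a tenfold rise in $T$ pushes almost all of the total into the kinetic term. Note that the formula as written treats every identified target as a strike.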

The risk is that the kinetic term $T \times C_k$ grows for reasons unrelated to strategy, an effect sometimes loosely labeled "Algorithm Bias." If the AI identifies targets that a human would have deemed insignificant, the military may find itself in an escalatory spiral, striking more frequently and drawing more retaliation, simply because the machine provided the opportunity. This creates a feedback loop where the ease of targeting dictates the tempo of the conflict, rather than the strategic objectives of the state.

Legislative Frictions and the Oversight Gap

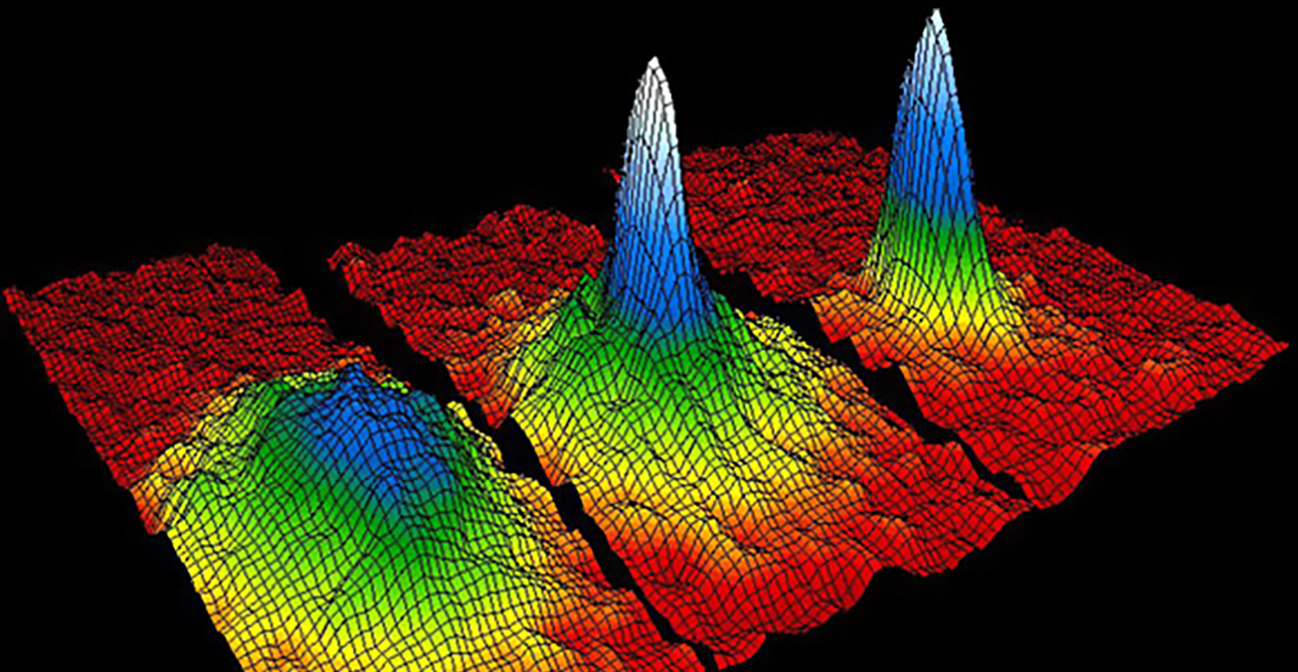

The call for oversight from lawmakers stems from the opacity of these systems, the so-called "Black Box" problem. When an AI system recommends a strike, it does not provide a narrative explanation. It provides a heat map of pixels and a probability percentage.

Members of the House and Senate Armed Services Committees are concerned that the current legal framework—largely built around the 2001 Authorization for Use of Military Force (AUMF)—does not account for Distributed Responsibility. If an AI-assisted strike results in significant civilian casualties, the failure could lie in:

- The training data (biased or incomplete).

- The sensor hardware (low resolution causing misclassification).

- The human operator (over-trusting the machine's recommendation, known as "Automation Bias").

The lack of a standardized audit trail for AI-derived targeting data means that accountability is diffused across software engineers, data scientists, and commanding officers.

Tactical Vulnerabilities: Counter-AI Operations

As the U.S. increases its reliance on these models, adversaries like Iran and its proxies are incentivized to engage in Adversarial Machine Learning. This involves tactical deception designed to "fool" the AI's vision models.

Tactics include:

- Pixel Perturbation: Using specific paint patterns on vehicles that disrupt the edge-detection algorithms of computer vision.

- Decoy Proliferation: Deploying low-cost decoys, whether 3D-printed or inflatable, that mimic the thermal and visual signatures of high-value assets, forcing the AI to waste its processing power (or the military to waste expensive munitions).

- Environmental Masking: Moving assets specifically during weather conditions or times of day where the AI’s training data is known to be thin.

The reliance on AI creates a new surface area for electronic warfare. If an adversary can jam the data link that sends Reaper feeds to the processing cloud, the "smart" kill chain reverts to a "dumb" one, potentially leaving forces blinded in a critical moment.

The Strategic Shift from Intelligence to Prediction

The ultimate goal of this technological integration is the move from reactive intelligence to Predictive Maneuver. By analyzing historical movement patterns of Iranian-backed groups, the AI attempts to predict where a mobile launcher will be in thirty minutes, rather than where it was ten minutes ago.

This predictive capability is the "Holy Grail" of theater command, but it rests on the assumption that human behavior in war is a solvable equation. War is inherently a non-linear system. While AI can predict the most efficient logistics route for a truck, it cannot predict the political will of a commander or the psychological impact of a strike on a local population.

The technical superiority of the U.S. AI stack does not inherently translate to a strategic victory. A more efficient kill chain may simply produce tactical successes faster, and those successes may in turn lead to a wider, more fragmented conflict.

Strategic Recommendation for Defense Stakeholders

The military must move away from the pursuit of "Full Autonomy" and instead invest in Explainable AI (XAI). The current systems are too opaque for high-stakes geopolitical environments where a single misidentified target can trigger a regional war.

- Red-Teaming the Model: Implement mandatory "Adversarial Audits" where a dedicated team attempts to trick the targeting AI using local camouflage techniques before the model is deployed in a specific theater.

- Dynamic Thresholding: Adjust the confidence threshold required for a strike based on the proximity to civilian infrastructure. In a remote desert, the AI might operate at an 85% confidence threshold; in an urban center like Baghdad or Sana'a, that threshold must be hard-coded to 99.9%.

- Human-Centric Design: Re-engineer the analyst interface to highlight why the AI flagged a target (e.g., "identified 90% match for 122mm rocket tube") rather than just presenting a "Strike/No-Strike" prompt.
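
The dynamic-thresholding recommendation can be sketched as a simple policy function. This is a toy illustration, not a description of any fielded system. The 85% and 99.9% values come from the text above, while the 500-metre cutoff, the two-tier structure, and the function names are assumptions made purely for the example.

```python
def required_confidence(distance_to_civilian_m: float, urban_radius_m: float = 500.0) -> float:
    """Return the minimum model confidence a recommendation must reach.

    urban_radius_m is a hypothetical cutoff; a real policy would be set by
    legal and operational review, not by a single constant.
    """
    if distance_to_civilian_m < 0:
        raise ValueError("distance must be non-negative")
    # Hard-coded strict tier near civilian infrastructure, looser tier elsewhere.
    return 0.999 if distance_to_civilian_m <= urban_radius_m else 0.85


def meets_threshold(model_confidence: float, distance_to_civilian_m: float) -> bool:
    """True if the recommendation clears the bar and can go to a human reviewer."""
    return model_confidence >= required_confidence(distance_to_civilian_m)


# The same 90% score passes in a remote area but is rejected near civilians.
print(meets_threshold(0.90, distance_to_civilian_m=10_000))  # True
print(meets_threshold(0.90, distance_to_civilian_m=100))     # False
```

Clearing the threshold here only gates what reaches the analyst. It does not replace the human verification step discussed earlier.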

The immediate priority should be the creation of a "Digital Black Box" for every AI-assisted strike. This ledger would record the model version, the training data used, the sensor inputs at the time of the strike, and the human operator's response time. Only through this level of granular data can the military provide the oversight that lawmakers demand and the precision that modern warfare requires.
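
One way such a ledger could be structured is sketched below. The four recorded fields follow the list above. Everything else is an assumption added for illustration, including the hash chaining that makes edits detectable, the names `AuditRecord`, `append_record` and `verify`, and the sample values.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace
from typing import List

GENESIS = "0" * 64  # placeholder hash for the first record in the chain


@dataclass(frozen=True)
class AuditRecord:
    model_version: str
    training_data_id: str
    sensor_input_sha256: str          # hash of the raw sensor input, not the input itself
    operator_response_seconds: float
    prev_hash: str                    # digest of the previous record (assumed design)

    def digest(self) -> str:
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return hashlib.sha256(payload).hexdigest()


def append_record(ledger: List[AuditRecord], model_version: str, training_data_id: str,
                  sensor_bytes: bytes, operator_response_seconds: float) -> AuditRecord:
    """Append a record linked to the digest of the one before it."""
    prev_hash = ledger[-1].digest() if ledger else GENESIS
    record = AuditRecord(model_version, training_data_id,
                         hashlib.sha256(sensor_bytes).hexdigest(),
                         operator_response_seconds, prev_hash)
    ledger.append(record)
    return record


def verify(ledger: List[AuditRecord]) -> bool:
    """Check that every record links to its predecessor.

    An edit to any record other than the last breaks the chain; protecting
    the final record would need an externally stored digest.
    """
    expected = GENESIS
    for record in ledger:
        if record.prev_hash != expected:
            return False
        expected = record.digest()
    return True


ledger: List[AuditRecord] = []
append_record(ledger, "model-v1", "dataset-2024a", b"frame-0001", 4.2)
append_record(ledger, "model-v1", "dataset-2024a", b"frame-0002", 31.0)
print(verify(ledger))  # True

# Retroactively altering the operator's response time breaks the chain.
ledger[0] = replace(ledger[0], operator_response_seconds=60.0)
print(verify(ledger))  # False
```

Recording the operator's response time alongside the model version is what allows an auditor to see whether review times were collapsing toward a few seconds per recommendation.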

The path forward is not found in more data, but in better-structured skepticism of the data we already have.